For my final (fourth) year project module, I decided to keep pursuing my interest in machine learning, this time focusing on recurrent models. Recurrent models tackle sequence problems in fields such as natural language processing, computer vision and healthcare.

Model

There are many different recurrent models out there, the most popular being the LSTM. In my work I took the LSTM as the baseline and compared it with more cutting-edge models across a variety of problem areas.

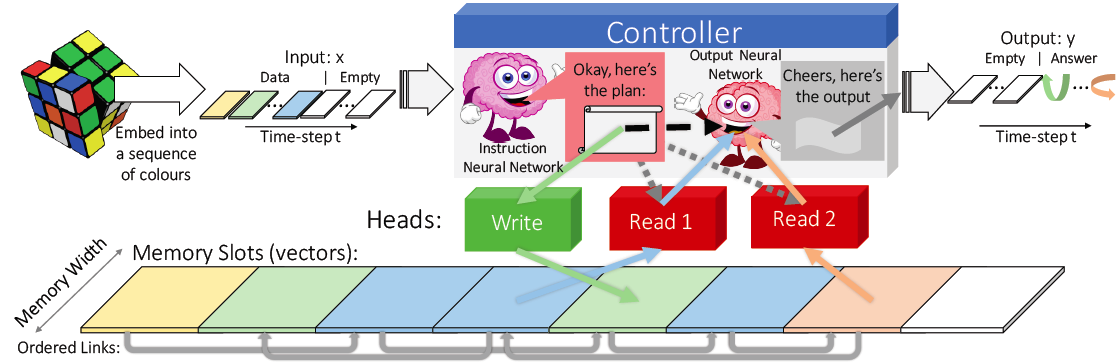

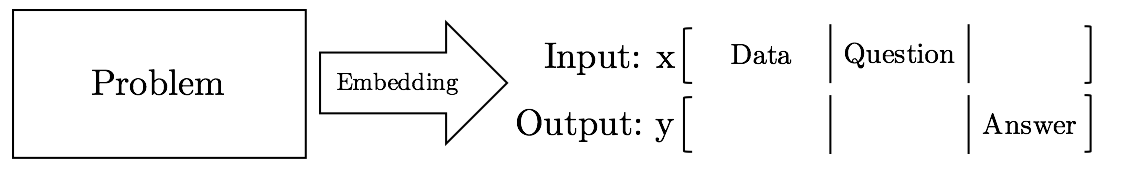

Differentiable Neural Computers (DNCs) bridge Turing machines and deep neural networks, using a neural network to learn an algorithm from data. The problem is first embedded as a sequence, which is fed step by step to a controller that executes operations on an internal state before regressing an output. The controller can be a feed-forward or a recurrent neural network; how best to optimise it is an open problem that we investigated.
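To make the "operations on internal state" a little more concrete, here is a minimal sketch of one such operation: a content-based read, where the controller emits a key and the memory returns a blend of its most similar rows. This is written in plain NumPy rather than TensorFlow so it stays self-contained, and the function name `content_read` and its parameters are my own illustrative choices, not the project's actual code.

```python
import numpy as np

def content_read(memory, key, strength):
    """Read from memory by content (cosine similarity) addressing.

    memory:   (N, W) array, N memory slots of width W
    key:      (W,) lookup key emitted by the controller
    strength: positive scalar that sharpens the read weighting
    """
    eps = 1e-8  # avoids division by zero for all-zero rows
    mem_norm = np.linalg.norm(memory, axis=1) + eps
    key_norm = np.linalg.norm(key) + eps
    similarity = memory @ key / (mem_norm * key_norm)  # shape (N,)

    # Softmax over slots, shifted by the max for numerical stability.
    scores = strength * similarity
    scores = scores - scores.max()
    weights = np.exp(scores) / np.exp(scores).sum()

    # The read vector is a weighted sum of memory rows.
    read_vector = weights @ memory
    return weights, read_vector

# Hypothetical toy memory with three orthogonal slots.
memory = np.eye(3)
weights, read_vector = content_read(
    memory, key=np.array([0.0, 1.0, 0.0]), strength=10.0
)
```

Because every step is differentiable, gradients can flow from the output back through the read weighting into the controller, which is what allows the lookup behaviour to be trained end to end.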

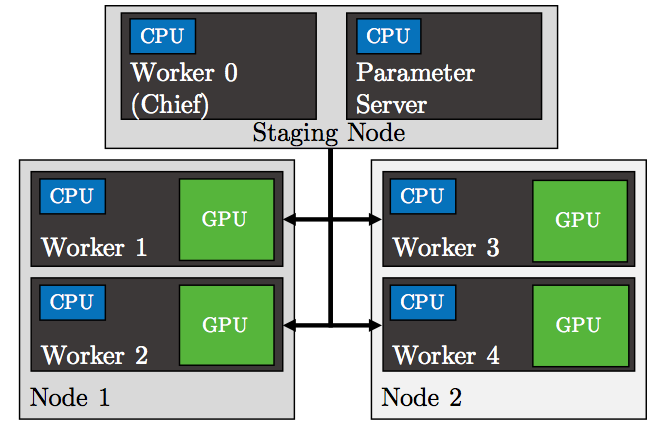

I optimised the model using classic regularisation techniques such as L1/L2 penalties and dropout, scaled training across distributed GPUs to maximise training speed, and investigated modifications to the memory lookup for different tasks. The DNC was tuned for both data structure tasks and real-world problems, demonstrating its versatility. In some cases the DNC outperformed an LSTM, reaching perfect (100%) accuracy, and visualisations validated its inner mechanisms.
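For readers unfamiliar with these regularisers, the sketch below shows what an L1/L2 weight penalty and (inverted) dropout compute. It is a generic NumPy illustration under my own naming (`l1_l2_penalty`, `dropout`), not the configuration used in the project, where the equivalent TensorFlow facilities would normally be used instead.

```python
import numpy as np

def l1_l2_penalty(weights, l1=0.0, l2=0.0):
    """Regularisation term added to the training loss."""
    return l1 * np.abs(weights).sum() + l2 * np.square(weights).sum()

def dropout(x, rate, rng, training=True):
    """Inverted dropout: zero a fraction `rate` of activations during
    training and rescale the rest so the expected value is unchanged."""
    if not training or rate == 0.0:
        return x
    keep = 1.0 - rate
    mask = rng.random(x.shape) < keep
    return x * mask / keep

rng = np.random.default_rng(0)
w = np.array([1.0, -2.0])
penalty = l1_l2_penalty(w, l1=0.1, l2=0.01)  # 0.1 * 3 + 0.01 * 5

activations = np.ones((4, 5))
dropped = dropout(activations, rate=0.5, rng=rng)
```

The penalty discourages large weights, while dropout discourages the network from relying on any single unit; at evaluation time dropout is switched off (`training=False`) and activations pass through unchanged.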

The models were compared on a variety of tasks including but not limited to:

- Natural Language Processing – Sentiment Analysis

- Data Structure Tasks (Copy, Lookup, Repeat Copy, Reverse, Graphs, etc.)

- Rubik's Cube

- MNIST Image Recognition
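As an illustration of what one of the data structure tasks in the list above looks like as training data, here is a minimal sketch of a Copy task generator. The exact encoding is an assumption on my part (random bit vectors, one extra input channel acting as an "end of input" flag); the project's own data format may well differ, and `make_copy_example` is a hypothetical helper name.

```python
import numpy as np

def make_copy_example(seq_len, width, rng):
    """Build one (inputs, targets) pair for a Copy task.

    The model sees `seq_len` random bit vectors, then a delimiter flag,
    and must reproduce the bit vectors during the steps that follow.
    """
    bits = rng.integers(0, 2, size=(seq_len, width)).astype(np.float32)

    total_steps = 2 * seq_len + 1
    inputs = np.zeros((total_steps, width + 1), dtype=np.float32)
    targets = np.zeros((total_steps, width), dtype=np.float32)

    inputs[:seq_len, :width] = bits   # presentation phase
    inputs[seq_len, width] = 1.0      # delimiter on the extra channel
    targets[seq_len + 1:] = bits      # recall phase
    return inputs, targets

rng = np.random.default_rng(42)
inputs, targets = make_copy_example(seq_len=5, width=8, rng=rng)
```

Under the same assumptions, a Reverse variant only needs the recall targets flipped in time (`bits[::-1]`), which is part of what makes this family of tasks convenient for comparing how models use memory.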

Implementation

The system was implemented in Python using the TensorFlow machine learning library and run on a high-performance GPGPU cluster.

Conclusion

The work validated and optimised the DNC against state-of-the-art models, highlighting the merits of each. I visualised the inner workings of the models and explored new ideas for improving them.

Paper & Poster

Principles and Applications of Differential Neural Computers: