For my third-year project in 2015, I wanted to explore machine learning by applying it to a real-world problem.

Facial Keypoints Problem

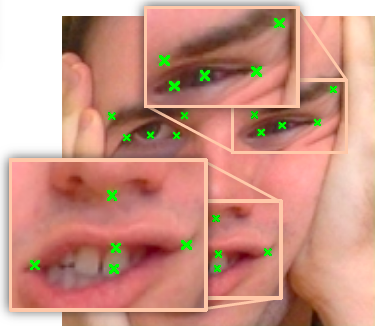

The facial keypoints problem stems from a branch of computer vision concerned with locating points of interest in images. A competition was standardised at kaggle.com as the ‘Kaggle Facial Keypoints Challenge’, ranking researchers from around the world on a leaderboard. Potential applications include emotion tracking, biometric analysis, generalised keypoint detection and medical diagnosis.

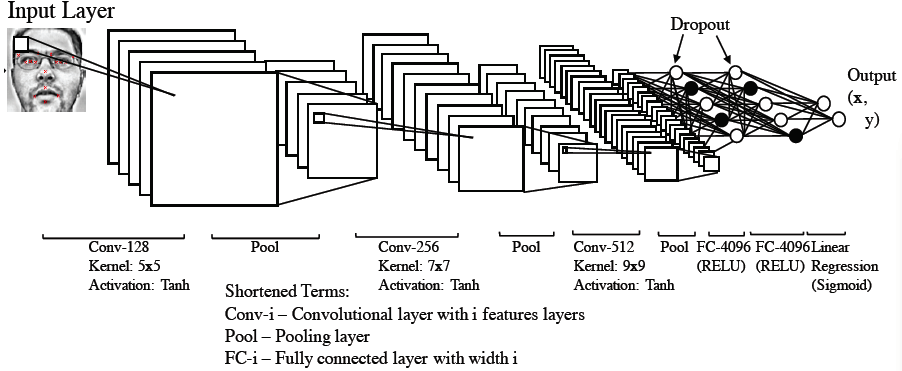

Model

I implemented a convolutional neural network (CNN), an architecture made powerful by its translation-invariant feature detection. I explored deeper networks, since depth has been shown to represent higher-level features and to reduce error in similar architectures. Regularisation was used to avoid over-fitting, where the model fits the training data at the expense of the general case: L1 and L2 penalties regularise the network weights, dropout draws on natural phenomena to add randomisation by switching off units at random during training, and early stopping prevents over-training.
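
To make the three regularisers concrete, here is a minimal NumPy sketch rather than the project's Theano code. The names `l1_l2_penalty`, `dropout` and `EarlyStopping`, and parameters such as `l1`, `l2`, `rate` and `patience`, are assumptions chosen for illustration.

```python
import numpy as np


def l1_l2_penalty(weights, l1=0.0, l2=0.0):
    """Penalty added to the training loss (l1 and l2 are hypothetical strengths)."""
    l1_term = sum(np.abs(w).sum() for w in weights)
    l2_term = sum((w ** 2).sum() for w in weights)
    return l1 * l1_term + l2 * l2_term


def dropout(activations, rate, rng, training=True):
    """Inverted dropout: zero a random fraction of units and rescale the rest."""
    if not training or rate == 0.0:
        return activations
    keep = 1.0 - rate
    mask = rng.random(activations.shape) < keep
    return activations * mask / keep


class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best:
            # New best validation loss: reset the counter.
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, the penalty would be added to the data loss, `dropout` applied to hidden activations only while training, and `should_stop` called once per epoch with the validation loss.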

The quality of the images varies, with problems ranging from over-exposure to rotation, which I tackled with a range of image processing techniques. Image augmentations such as rotations increased the size and diversity of the training set.
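
The important detail with rotation augmentation is that the keypoint labels have to move with the pixels. The sketch below assumes a 2-D greyscale image and keypoints stored as (x, y) pixel coordinates; `rotate_sample` is a hypothetical helper using nearest-neighbour sampling, not the project's own pipeline.

```python
import numpy as np


def rotate_sample(image, keypoints, angle_deg):
    """Rotate a 2-D image and its (x, y) keypoints about the image centre."""
    h, w = image.shape
    cx, cy = (w - 1) / 2.0, (h - 1) / 2.0
    theta = np.deg2rad(angle_deg)
    cos_t, sin_t = np.cos(theta), np.sin(theta)

    # Forward map for keypoints: p' = c + R (p - c).
    dx, dy = keypoints[:, 0] - cx, keypoints[:, 1] - cy
    new_kp = np.stack([cx + cos_t * dx - sin_t * dy,
                       cy + sin_t * dx + cos_t * dy], axis=1)

    # Inverse map for pixels: each output pixel looks up its source pixel.
    ys, xs = np.mgrid[0:h, 0:w]
    qx, qy = xs - cx, ys - cy
    src_x = np.rint(cx + cos_t * qx + sin_t * qy).astype(int)
    src_y = np.rint(cy - sin_t * qx + cos_t * qy).astype(int)
    inside = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)

    # Pixels whose source falls outside the image are left as zeros.
    rotated = np.zeros_like(image)
    rotated[inside] = image[src_y[inside], src_x[inside]]
    return rotated, new_kp
```

Calling this with a few small random angles per training image would give extra samples whose labels stay aligned with the faces.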

Results

Random grid search, together with 3D graphs of the results, made it quick to optimise parameter configurations. The model reached 2nd place on the worldwide leaderboard and settled into a final position of 6th over a year later.
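
As a rough illustration of the search, the sketch below samples random configurations from a grid and keeps the one with the lowest score. The function `random_search`, the `evaluate` callback and any parameter names passed in the grid are assumptions; in practice `evaluate` would train a model and return its validation error.

```python
import random


def random_search(grid, evaluate, n_trials, seed=0):
    """Sample `n_trials` random configurations and return the best one found."""
    rng = random.Random(seed)
    best_config, best_score = None, float("inf")
    for _ in range(n_trials):
        # Pick one value per hyperparameter, independently and at random.
        config = {name: rng.choice(values) for name, values in grid.items()}
        score = evaluate(config)  # lower is better, e.g. validation error
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score
```

Recording every `(config, score)` pair instead of only the best would give the data needed for the kind of 3D plots mentioned above.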

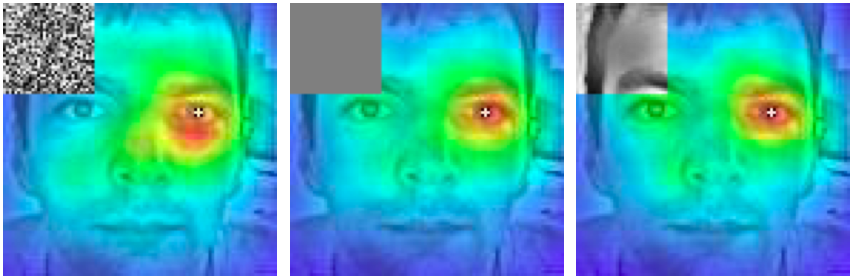

Heat map visualisations gave an insight into how the model learned. I investigated an occlusion algorithm, compared different censors, and developed a novel extension that prevents the censor itself from introducing additional edges.
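
For readers unfamiliar with occlusion heat maps, here is a minimal sketch of the basic idea only; it does not reproduce the novel extension. It assumes a hypothetical `predict` function that maps a 2-D image to an array of keypoint coordinates, and a flat `fill` value as one possible censor.

```python
import numpy as np


def occlusion_heatmap(image, predict, patch=4, stride=2, fill=0.0):
    """Slide a censor patch over the image and record how far predictions move."""
    h, w = image.shape
    baseline = predict(image)
    heat = np.zeros((h, w))
    counts = np.zeros((h, w))
    for top in range(0, h - patch + 1, stride):
        for left in range(0, w - patch + 1, stride):
            censored = image.copy()
            censored[top:top + patch, left:left + patch] = fill
            # Root-mean-square shift of the outputs caused by this censor position.
            shift = np.sqrt(np.mean((predict(censored) - baseline) ** 2))
            heat[top:top + patch, left:left + patch] += shift
            counts[top:top + patch, left:left + patch] += 1
    # Average over overlapping patches; pixels never covered stay at zero.
    return heat / np.maximum(counts, 1)
```

Regions where the heat map is high are those the model relies on most, and swapping `fill` for other values is one simple way to compare censors.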

Conclusion

The results validated the CNN model for facial keypoint detection. Fast propagation proved useful for time-critical applications such as real-time video systems. Regularisation and pre-processing methods helped to reduce error. The novel improvement to the visualisation algorithm showed promise for understanding models better. Future research could entail scaling the model down to run on a more portable machine and reducing error for outlier cases.

Implementation

The system was implemented in Python using the Theano machine learning library and ran on a high-performance GPGPU.

Paper & Poster

Optimising Facial Keypoint Detection With Deep Learning: